Agent-native infrastructure

for production AI

Define agents as code. Deploy with a single command. Astro AI handles models, knowledge bases, tool integrations, and observability so you build what matters.

Everything agents need to run

Purpose-built infrastructure primitives, not repurposed web hosting.

Models

Attach any LLM (OpenAI, Anthropic, open-source) declaratively. Swap providers without touching code.

Knowledge bases

Vector-indexed knowledge stores with automatic ingestion and chunking. Your agents stay grounded.

Tool integrations

Connect APIs, databases, and SaaS tools. Agents call them natively with built-in auth and retries.

Observability

Traces, metrics, and logs out of the box. See every decision your agent makes in production.

Guardrails & secure isolation

Apply deterministic rules to constrain agent input and output.

Auto-scaling

From zero to thousands of concurrent sessions. Infrastructure that responds to demand, not configuration.

Declarative by design

The Astro AI spec (astropods.yml) is your harness—models, knowledge stores, tools, and observability defined in one file.

- Version-controlled agent topology

- Environment-aware configuration

- Reproducible builds across teams

- GitOps-ready deployment pipeline

spec: package/v1

name: support-agent

agent:

image: astro/support-agent:latest

interfaces:

frontend: true

messaging: true

models:

main:

provider: openai

models: [gpt-4o, gpt-3.5-turbo]

backup:

provider: ollama

models: [llama3.2]

rails:

provider: openai

model: gpt-3.5-turbo-instruct

knowledge:

docs:

provider: qdrant

persistent: true

vector:

provider: pinecone

integrations:

github:

provider: github

dev:

command: bun --watch run mastra

Three commands to production

Shared knowledge sources and consistent deployments mean you experiment and ship to production faster.

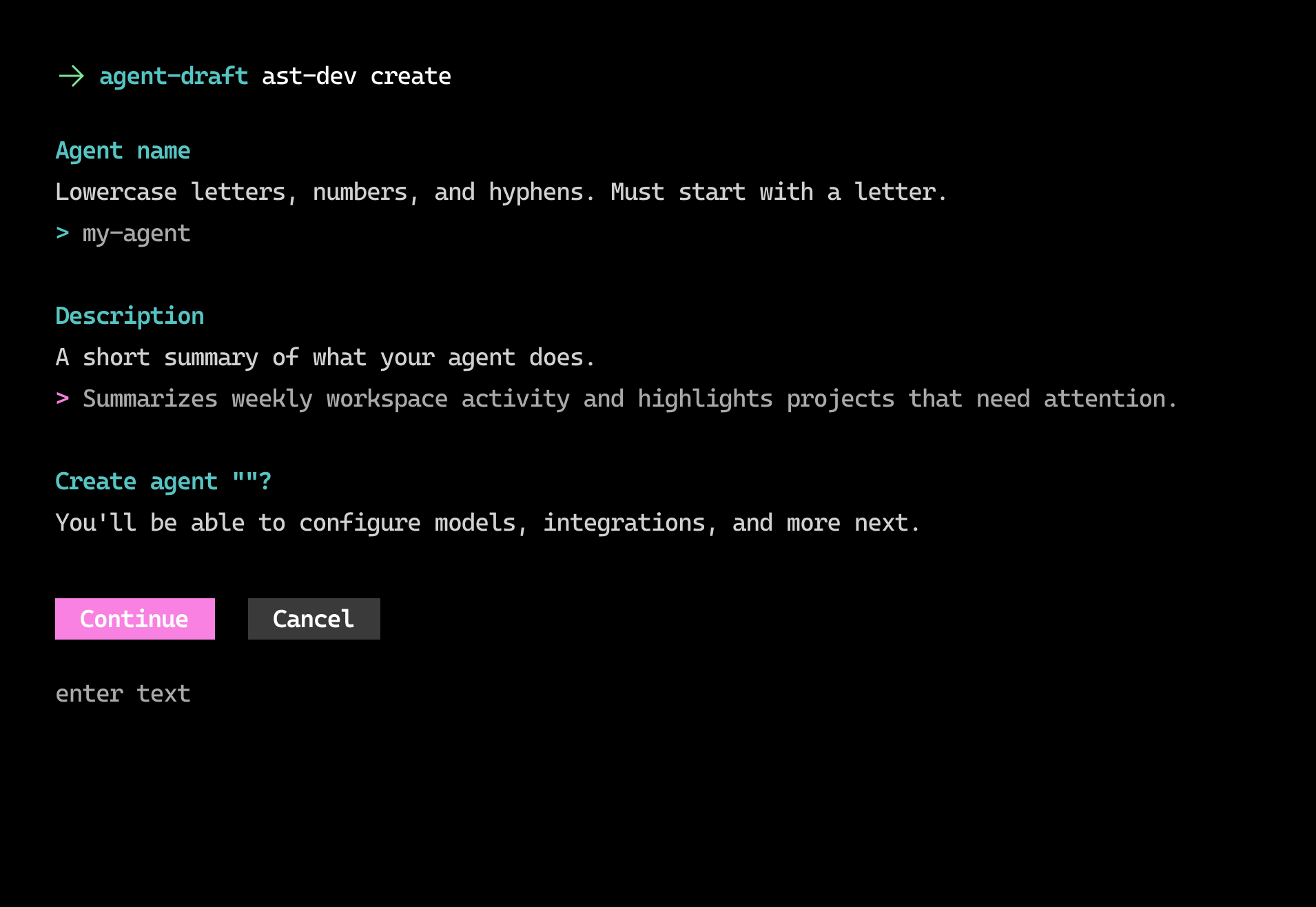

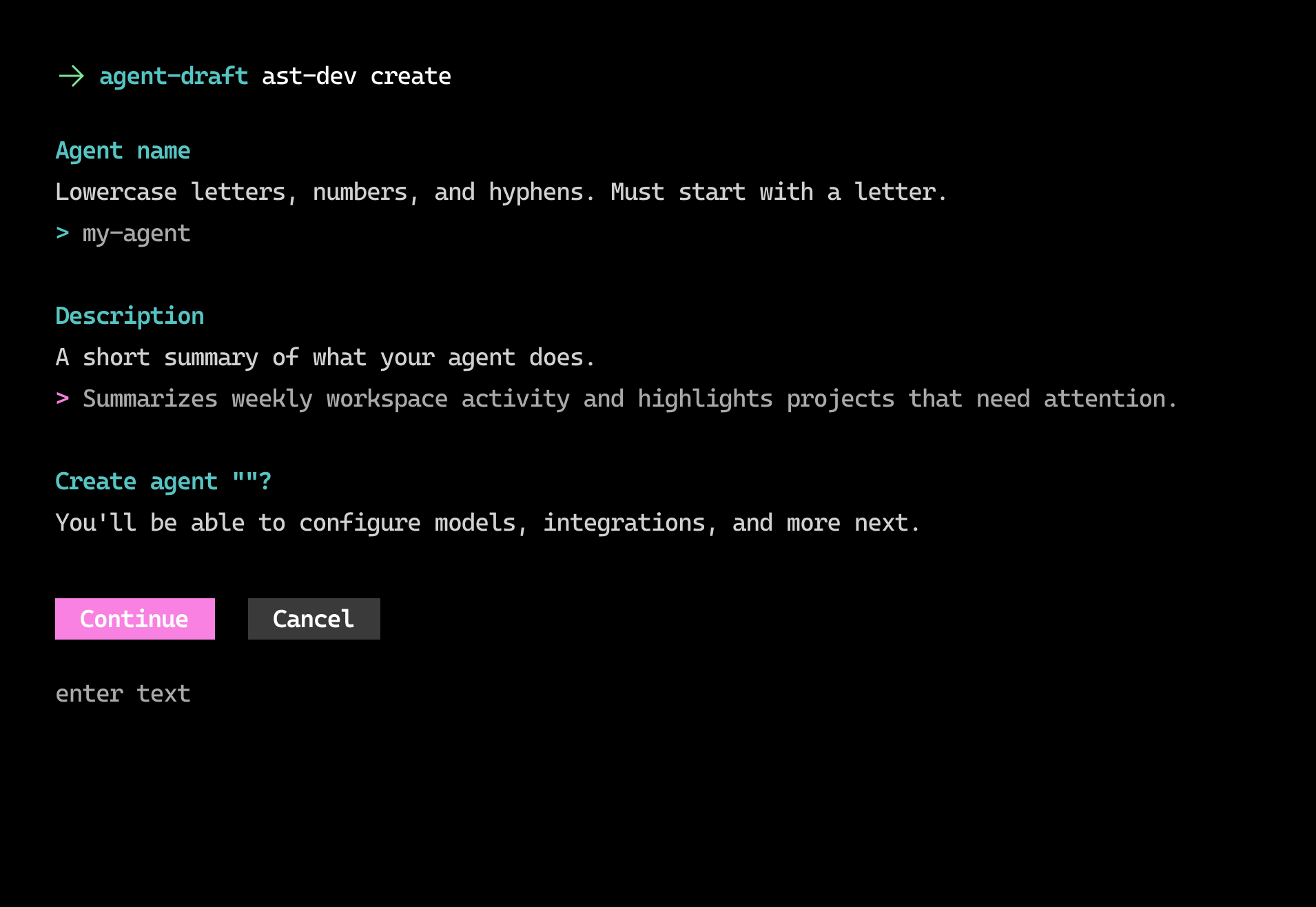

1. Create harness

One command generates the project structure and a starter Astro AI spec so your agent is fully specified as repeatable infrastructure.

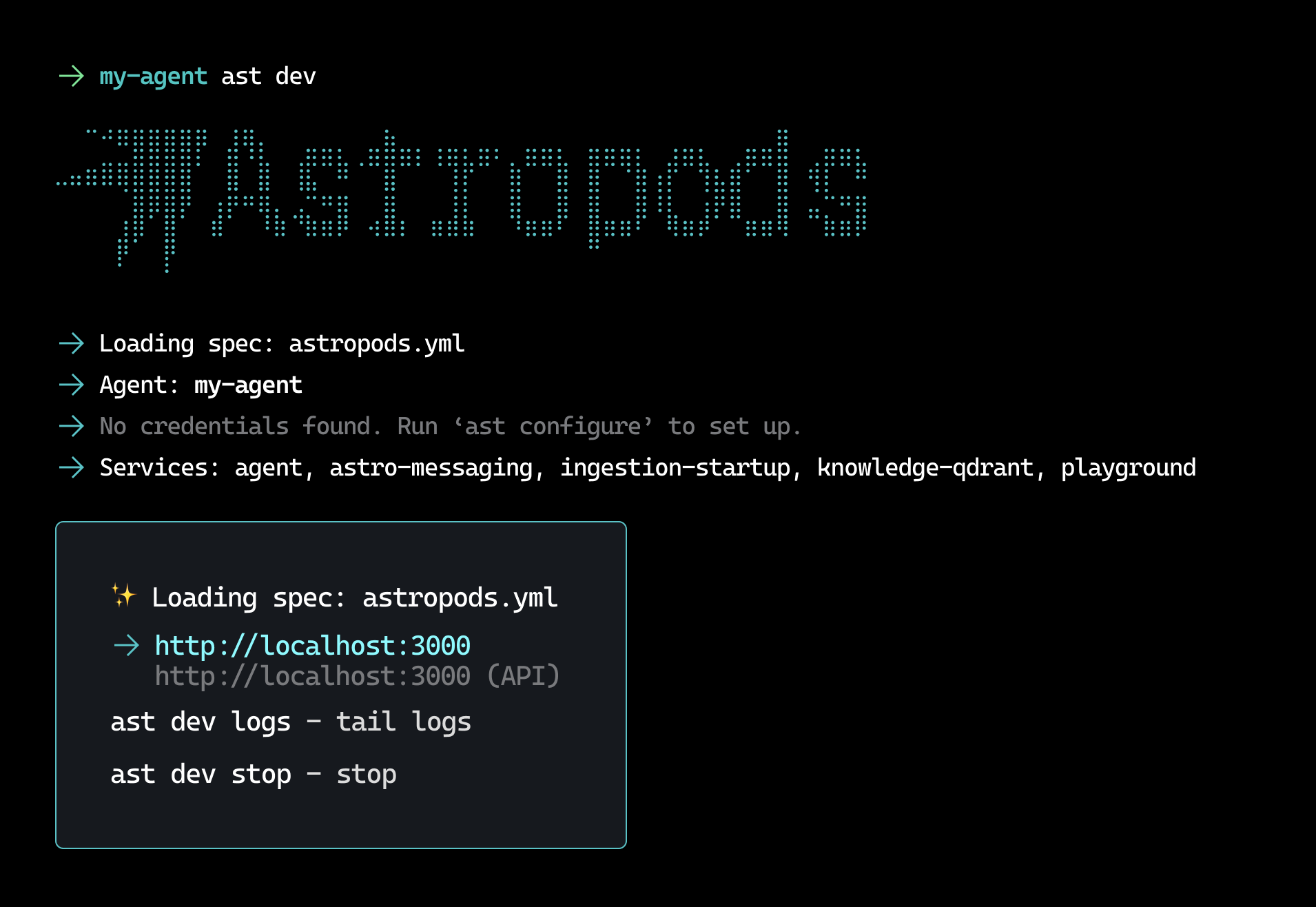

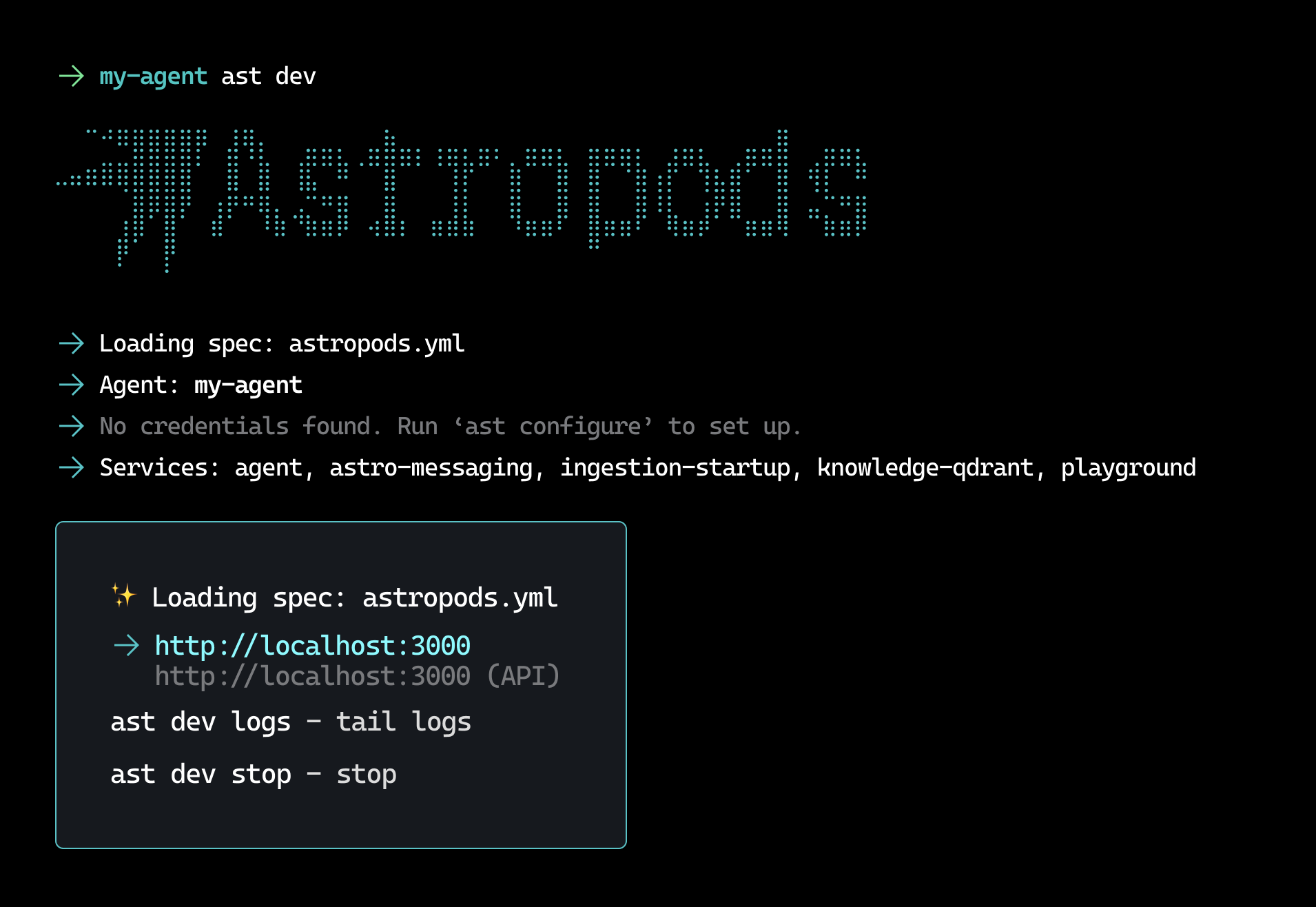

2. Develop locally

Run your agent locally with hot-reload. Test against live models and tools with built-in adapters for voice, text, Slack, and streaming interfaces.

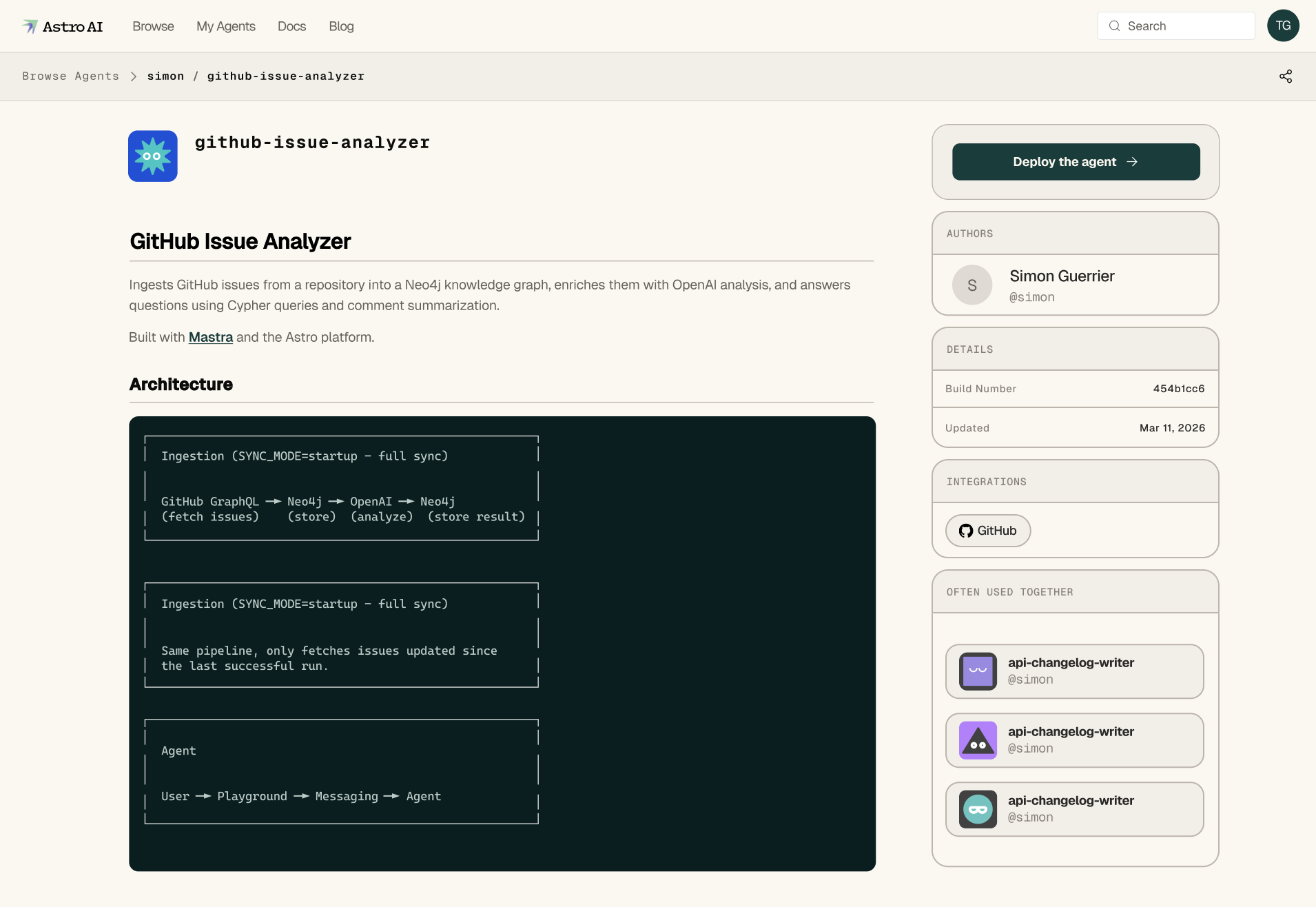

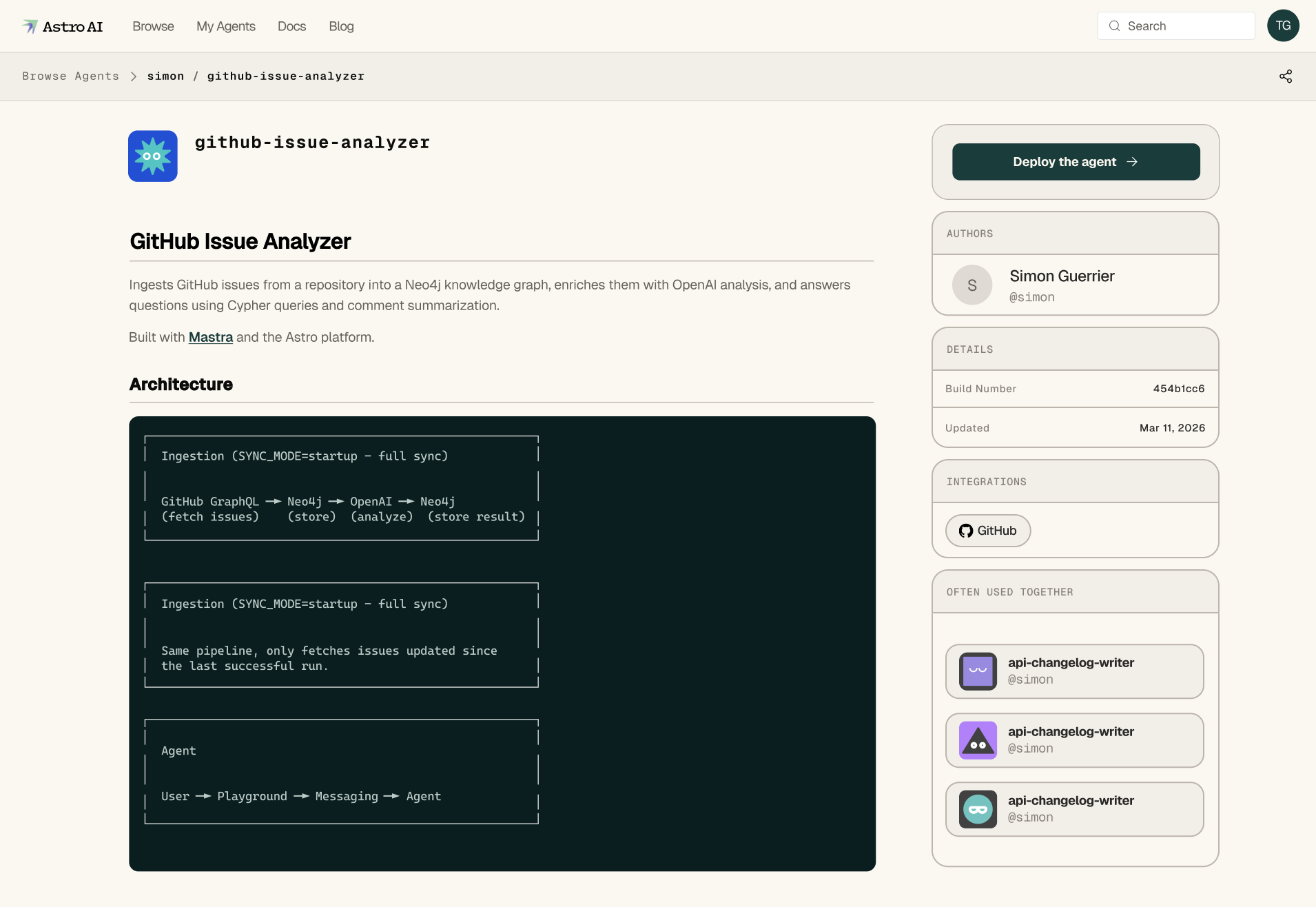

3. Share & deploy

Push to the Astro AI registry. Your agent is live with tracing, scaling, and versioning, available to others in your organization to use.

1. Create harness

One command generates the project structure and a starter Astro AI spec so your agent is fully specified as repeatable infrastructure.

2. Develop locally

Run your agent locally with hot-reload. Test against live models and tools with built-in adapters for voice, text, Slack, and streaming interfaces.

3. Share & deploy

Push to the Astro AI registry. Your agent is live with tracing, scaling, and versioning, available to others in your organization to use.

Built different

Not just another hosted runtime. Astro AI gives you control, flexibility, and independence at the infrastructure level.

Live updates, zero downtime

Change agent configurations, swap models, update guardrails, and adjust policies in production without redeploying. Your agents keep running while you iterate.

Framework-agnostic

Bring agents built with CrewAI, LangChain, Mastra, or your own custom framework. Astro AI doesn't lock you into a single SDK. It runs whatever you build.

Self-host or managed

Run Astro AI in your own cloud, on-prem, or use our managed platform. Your agents, your data, your infrastructure — with no vendor lock-in.

An open ecosystem

Build your agents using popular frameworks of your choice. Frameworks are adapted with a unified SDK to make using any framework as easy as a single import.

Built for real workloads

Real agents built and deployed by teams on Astro AI, complete with ingestion and inference engines. Because you can't deploy real agents without first building the right knowledge sources.

Searches and summarizes documentation across multiple sources with context-aware retrieval.

Reviews pull requests for bugs, security issues, and style violations with full repo context.

Generates and A/B tests email campaigns, landing page copy, and social content.

Triages and resolves support tickets end-to-end with knowledge base lookup and escalation.

Scores and qualifies inbound leads using CRM data, enrichment APIs, and custom criteria.

Monitors application logs in real-time, detects anomalies, and surfaces actionable insights.

Make your agent

your own

Every agent gets a verifiable identity. Track provenance, capabilities, and performance at a glance.

- Verifiable provenance and ownership

- Real-time performance metrics

- Capability tagging and tier classification

- Tamper-proof registry records

Apache 2.0 Licensed

Apache 2.0 LicensedOpen-source to the core

Transparent, extensible, community-driven.

Contribute & earn free compute

Build and share agents on the platform. Contributors earn free compute credits to run their agents in production.

Share with your team or the world

Deploy agents privately within your organization or publish them to the community for anyone to use and customize.

Join the community

Be part of a growing community of agent developers building, iterating, and shipping real AI agents together.

Build, deploy, and manage your agents.

Define agents as code. Deploy with a single command.